CS 111 Lecture: Working with different file formats¶

In this lecture notebook, we will discuss two new formats, JSON and CSV, as well as the corresponding Python modules, json and csv, which make it really easy to manipulate these formats. While both JSON and CSV files can be viewed as TEXT files (and opened with text editors), we will show that it is more efficient to use specialized modules to read and write data in such formats.

Table of Contents

1. Reading and writing dictionaries from/to text files¶

In the notebook folder you will find the file us-states.txt, which looks like this:

Alabama is home to 4921532 people.

Alaska is home to 731158 people.

Arizona is home to 7421401 people.

Arkansas is home to 3030522 people.

California is home to 39368078 people.

Colorado is home to 5807719 people.At the end of the notebook, we have left it as an exercise for you to read the content of this file into a dictionary, statesDct. For the moment, we will assume that you wrote the code and that the variable statesDct is assigned the dictionary that was returned from the file. Currently it has this data:

statesDct = {'Alabama': 4921532, 'Alaska': 731158, 'Arizona': 7421401, 'Arkansas': 3030522,

'California': 39368078, 'Colorado': 5807719, 'Connecticut': 3557006, 'Delaware': 986809,

'Florida': 21733312, 'Georgia': 10710017, 'Hawaii': 1407006, 'Idaho': 1826913,

'Illinois': 12587530, 'Indiana': 6754953, 'Iowa': 3163561, 'Kansas': 2913805,

'Kentucky': 4477251, 'Louisiana': 4645318, 'Maine': 1350141, 'Maryland': 6055802,

'Massachusetts': 6893574, 'Michigan': 9966555, 'Minnesota': 5657342, 'Mississippi': 2966786,

'Missouri': 6151548, 'Montana': 1080577, 'Nebraska': 1937552, 'Nevada': 3138259,

'New Hampshire': 1366275, 'New Jersey': 8882371, 'New Mexico': 2106319, 'New York': 19336776,

'North Carolina': 10600823, 'North Dakota': 765309, 'Ohio': 11693217, 'Oklahoma': 3980783,

'Oregon': 4241507, 'Pennsylvania': 12783254, 'Rhode Island': 1057125, 'South Carolina': 5218040,

'South Dakota': 892717, 'Tennessee': 6886834, 'Texas': 29360759, 'Utah': 3249879, 'Vermont': 623347,

'Virginia': 8590563, 'Washington': 7693612, 'West Virginia': 1784787, 'Wisconsin': 5832655,

'Wyoming': 582328}

Can we save this dictionary into a new file, so that we can easily reuse it in the future?

We can try to use the file method write that we have already seen:

with open('statesPopulation.txt', 'w') as outFile: # open file for writing

outFile.write(statesDct) # write the entire dictionary

This fails, because we can only write string values into a file with the write method.

We can try to convert our dictionary into a string before writing into a file:

with open('statesPopulation.txt', 'w') as outFile:

outFile.write(str(statesDct))

This seems to work, but let's open the content of the file statesPopulation.txt to check its content.

more statesPopulation.txt

We verified the succes of our approach. Now, can we read this dictionary from the file into a variable again?

with open('statesPopulation.txt', 'r') as inputFile:

statesDct2 = inputFile.read()

This also worked. But, what is stored in statesDct2?

type(statesDct2)

statesDct2

A string is not what we need, because it will not recognize the dictionary keys.

For example, can you anticipate the error that the following expression will generate?

statesDct2['Alabama']

We could try to convert this sring to a dictionary, using the built-in function dict:

statesDct3 = dict(statesDct2)

This fails with a ValueError because the function dict expects a sequence (e.g a list, a tuple) where each element has length of two, meaning it is composed of two items (the first one to serve as a key and the second one as a value). Below we see a correct example of converting a list of tuples into a dictionary:

dict([('Alabama', 4921532), ('Alaska', 731158)])

A tuple of tuples can also be converted into a dictionary:

dict((('a', 1), ('b', 2), ('c', 3)))

But, let's go back to our problem: how to read the content of statesPopulation.txt into a dictionary? Right now, we are only able to read its content as a string value:

statesDct2

We could try to do some string splitting and value conversion:

split1 = statesDct2.split(', ') # we know this gives us a list

split1[:5]

Then, we will need to split each item of this list at the colon:

split2Lst = []

for item in split1:

split2 = item.split(': ')

split2Lst.append(split2)

split2Lst[:3] # first three items

split2Lst[-3:] # last three items

Alternatively, we can use list comprehension to do the same thing, but with less code:

split2Lst = [item.split(': ') for item in split1]

split2Lst[:3]

Overall, we are getting closer, but there is still some work to do:

- the state names have single quotes as part of their names

- the numbers are strings instead of integers

- the first item has an extra '{' character we need to remove ("{'Alabama'")

- the very last item has an extra '}' that we need to remove ('582328}')

Having to do this process with every text file that stores a dictionary is tedious. This is where existing Python libraries (modules) come to rescue. They have functions that take care of all the details for us.

2. The JSON module to read/write dictionaries¶

Python provides a dedicated module, json, to manipulate data in the JSON format. JSON is a standard format for encoding as a string (possibly nested) lists and dictionaries whose elements are numbers/strings/booleans.

import json

2.1 Writing a JSON file¶

This is somewhat similar to writing into a text file, but instead of the method write, we are using the json's module function dump to write the content of our variable into the file object. Because this function doesn't write data line by line, the process of transferring the content into the file is seen as a "dumping" process (the whole content is dumped as a big lump).

with open('statesPopulation.json', 'w') as outF:

json.dump(statesDct, outF)

Check that the file was created:

ls -al

Let's view the file:

more statesPopulation.json

2.2 Reading a JSON file¶

This is again similar to reading a text file with the method read, but instead we have a special function, titled load.

with open('statesPopulation.json', 'r') as inF:

statesDct2 = json.load(inF)

Another difference worth pointing out is that load is a function in the module json, it is not a method for the file object. This is reflected in the way they are used with respect to the file object:

inF.read()- call the method read of the objectinFjson.load(inF)- invoke the functionloadin thejsonmodule and pass to it as argument the objectinF

Let's now go back to the data we read from the file. What type is it?

type(statesDct2)

It's a dict type! Also, let's compare it to the original dictionary:

statesDct == statesDct2

We successfully moved data from the notebook to a JSON file and back, without any loss in representation.

2.3 JSON Summary¶

We saw two functions of the json module, which operate on JSON formatted data:

json.dump(statesDct, outF)# takes two arguments, the value to be stored and the file object. It does not return a value.json.load(inF)# takes one argument only, the file object from which to read the content. It returns the content of the file in a JSON format (typically a dictionary or a list of nested items).

2.4 Real-world example: Tweets are stored in JSON¶

Tweets "travel" in JSON format from the Twitter server to its millions of users' devices. Here is what a typical tweet looks like in JSON format:

oneTweet = \

{"created_at": "Thu Apr 06 15:24:15 +0000 2017",

"id_str": "850006245121695744",

"text": "1/ Today we\u2019re sharing our vision for the future of the Twitter API platform!\nhttps://t.co/XweGngmxlP",

"user": {

"id": 2244994945,

"name": "Twitter Dev",

"screen_name": "TwitterDev",

"location": "Internet",

"url": "https://dev.twitter.com/",

"description": "Your official source for Twitter Platform news, updates & events. Need technical help? Visit https://twittercommunity.com/ \u2328\ufe0f #TapIntoTwitter"

},

"place": {

},

"entities": {

"hashtags": [

],

"urls": [

{

"url": "https://t.co/XweGngmxlP",

"unwound": {

"url": "https://cards.twitter.com\cards\18ce53wgo4h\3xo1c",

"title": "Building the Future of the Twitter API Platform"

}

}

],

"user_mentions": [

]

}

}

We can then access its parts as we do for a usual dictionary:

oneTweet["text"]

Items are nested:

oneTweet["user"]["screen_name"]

Occasionally, the nesting is a few levels deep, as a combination of dicts and lists:

oneTweet["entities"]["urls"][0]["url"]

2.5 Your Turn to read/write JSON¶

Using the variable statesDct above, write the following pieces of code (in separate Notebook cells)

- a for loop that creates a new dictionary containing the pairs state:population for state names that start with a vowel, named

vowelStatesDict - code that saves this dictionary as JSON file: vowelStates.json

- code that reads this JSON file into a dictionary

vowelStatesDict2and compares it tovowelStatesDict1

This is the dictionary that you should expect to be created:

{'Alabama': 4921532,

'Alaska': 731158,

'Arizona': 7421401,

'Arkansas': 3030522,

'Idaho': 1826913,

'Illinois': 12587530,

'Indiana': 6754953,

'Iowa': 3163561,

'Ohio': 11693217,

'Oklahoma': 3980783,

'Oregon': 4241507,

'Utah': 3249879}# Write the for loop to create the vowelStatesDict

# Your code here

vowelStatesDict = {}

for state in statesDct:

if state[0] in 'AEIOU':

vowelStatesDict[state] = statesDct[state]

vowelStatesDict

# Write code to save this dictionary into a JSON file

# Your code here

import json

with open("vowelStates.json", 'w') as outputF:

json.dump(vowelStatesDict, outputF)

Test that your code worked:

more vowelStates.json

# Write code to read the dictionary from the JSON file into a new dictionary, vowelStatesDict2

# Your code here

with open("vowelStates.json", 'r') as inputF:

vowelStatesDict2 = json.load(inputF)

vowelStatesDict2

Test that your code worked:

vowelStatesDict == vowelStatesDict2

3. Working with CSV files¶

A CSV (comma-separated values) file is a file within which the values are separated by commas. An appropriate software like Excel can display this data as a table of rows and columns. However, a CSV file is a text file, thus, we can read its content similarly to all text files we have seen so far, with the simple twist that we need to split values at commas. Below is a function that does that:

def tuplesFromFile(filename):

'''Read each line from opened file,

strip white space,

split at commas,

convert as tuple and

return a list of tuples.

'''

with open(filename, 'r') as inputFile:

theTuples = [tuple(line.strip().split(',')) # a list comprehension

for line in inputFile]

return theTuples

Let's read the content of the file us-states-more.csv:

statesData = tuplesFromFile("us-states-more.csv")

len(statesData)

There are 51 entries, because the first one is the tuple with the column names:

statesData[:4]

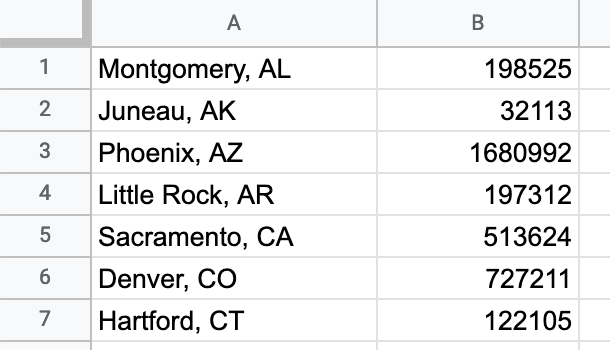

There are so many things we can do with this data, but for the moment, we will try something simple: create a CSV file that will look like this:

Let's first create the data that looks similar to what the file should contains:

capitals = [] # new list to store tuples of ("Capital, Abbr", Population)

for _,_,abbr,cap,capPop in statesData[1:]: # notice the tuple assignment, since each line is a tuple

# also, we're skipping the row at index 0

capitals.append((f"{cap}, {abbr}", capPop)) # use the f-string to join two values into a string

capitals[:4] # show the first four items

This worked well. Now we can store this data into a CSV file:

with open("capitals-only.csv", "w") as outF:

for item in capitals:

row = f"{item[0]},{item[1]}\n" # create the row to write in the file

outF.write(row)

more capitals-only.csv

The data is stored. Will our function tuplesFromFile be able to correctly read this file?

capitals2 = tuplesFromFile("capitals-only.csv")

capitals == capitals2

We didn't get back the same content that we put in. That is cause for concern. Let's look at each list separately:

capitals[:3]

capitals2[:3]

We can see what happened: our function tuplesFromFile has split the values at the comma, and created a three-element tuple, instead of the two-element tuple we wrote into the file. This means that every time we want to read from a new CSV file, we will have to write a new function that has to take into account the nature of the data. This is time-consuming.

Similarly to the situation we encountered above with the dictionaries, Python has a solution for this, the module csv, which knows how to deal with such situations, where commas can have a dual role: part of a string and separators of values.

4. The csv module to work with CSV data¶

The csv module has many useful functions that create objects that know how to perform certain operations. These objects are similar to the file objects we learned about a few weeks ago.

csv.reader--> an object that knows how to read a file object line by line. It returns a list of lists.csv.writer--> an object that knows how to write lists into a file object.csv.DictReader--> an object that knows how to read a file object into a list with each element as a dictionary.csv.DictWriter--> an object that knows how to write into a file object a list of dictionaries.

import csv

4.1 Using csv.reader and csv.writer¶

Let's first try to write out capitals data using the appropriate method:

with open("capitals-fixed.csv", "w") as outF:

writer = csv.writer(outF) # create an csv.writer object tied to the file object opened for writing

writer.writerows(capitals) # call method writerows to write a list into the file object

What kind of value is writer?

type(writer)

To check that the data were written correctly, let's examine the ouptut file:

more capitals-fixed.csv

IMPORTANT: Note that each element in the first column is a pair of (1) capital and (2) state abbreviation that is explicitly quoted. The quotation marks effectively say "don't intepret commas inside the quotes as separating column values".

To check that the data were written correctly, we also want to read them:

with open("capitals-fixed.csv", "r") as inF:

reader = csv.reader(inF) # create a csv.reader object tied to the file object opened for reading

capitals3 = list(reader) # the function list forces reader to iterate and read its content

# this is similar to what we do to the range() object.

capitals == capitals3

Hmm, something is still not right. Let's check what was read:

capitals3[:4]

This is a list of lists (each with two elements). However, as we saw above, capitals is a list of tuples. These are not the same. But, we can convert lists to tuples as below:

with open("capitals-fixed.csv", "r") as inF:

reader = csv.reader(inF)

capitals4 = [tuple(row) for row in reader] # read each row and convert it to a tuple

capitals == capitals4

Now we have the right answer! The commas within the first column were automatically taken care of by csv.writer and csv.reader.

Write the column names as the first row¶

The file we wrote only contains the values, not the names of the columns. Usually, we want to have the first row dedicated to columns, because we will share the file with other people. The csv.writer object has a method writerow, that allows us write individual rows. Let's try it out:

with open("capitals-column-names.csv", "w") as outF:

writer = csv.writer(outF) # create an instance of writer object

writer.writerow(["Capital, Abbr", "Population"]) # call method writerow to write a single row

writer.writerows(capitals) # call method writerows to write a list into the file object

more capitals-column-names.csv

4.1.1 Your turn: Read with csv.reader¶

- Use

csv.readerto read the content of the file us-states-more.csv. - Save the content of the file into the variable

us_statesand print out the first five rows.

# Your code here

with open("us-states-more.csv", 'r') as inputF:

reader = csv.reader(inputF)

us_states = list(reader)

print(us_states[:5])

4.1.2 Your turn: Write with csv.writer¶

- From the variable

us_states, create a list of lists, namedonly_statesthat is made up of two elements, statename and abbreviation, for example, ['Alaska', 'AK']. - Write the file

states-only.csvusingcsv.writer.

# Your code here

us_states = [[line[0], line[2]] for line in us_states]

print(us_states[:3])

with open('states-only.csv', 'w') as outputF:

writer = csv.writer(outputF)

writer.writerows(us_states)

Check that the file was written:

more states-only.csv

4.2 Using DictReader¶

Now that our CSV file has a column name, we can use the more advanced DictReader object to read the data:

with open("capitals-column-names.csv", "r") as inF:

dctReader = csv.DictReader(inF) # create an object of the csv.DictReader tied to the file object

dctRows = list(dctReader) # read the content of dctReader, forcing it to iterate

len(dctRows)

Even though we added an extra row to the file to specify the column names, we still received 50 rows from the file, what happened to our row?

oneRow = dctRows[0]

oneRow

This is a more advanced kind of the dictionary data structure, which shows its data as tuples of key,value pairs. We can use key indexing as with other dictionaries:

oneRow['Population']

Let's use this information to calcuate the total number of people who live in the US State capitals:

totalSum = 0

for row in dctRows:

totalSum += int(row['Population'])

totalSum

The previous code can be written more succinctly with a list comprehension:

totalSum = sum([int(row['Population']) for row in dctRows])

totalSum

4.3 Using DictWriter¶

What is the advantage of using DictWriter? It gives us control about how to order the columns. To see this in action, we will use our original file us-states-more.csv, which we stored in statesData.

statesData[:4]

To use DictWriter our data needs to be stored as a list of dictionaries, right now it's a list of tuples. The code below does the conversion, do not worry about it right now, we will discuss it again next time.

statesList = [] # this is store our list of dictionaries

for row in statesData[1:]:

statesList.append(dict(zip(statesData[0], row)))

statesList[:2]

We now have the list of dictionaries that we need. Additionally, let's specify the new order of columns:

columns = ['Abbrev.', 'State', 'Capital', 'CapitalPop', 'StatePop']

with open("us-states-rearranged.csv", "w") as outF:

writer = csv.DictWriter(outF, fieldnames=columns)

writer.writeheader() # this method doesn't need an argument, it uses the fieldnames parameter

writer.writerows(statesList) # writes the list of dictionaries into the file object

more us-states-rearranged.csv

We successfully rewrote the structure of our files with very little effort. That is the power of Python modules.

5. Exercises¶

5.1 Ex. 1: Read and write a CSV file¶

You are given the file south_america.csv that contains two columns: country names and capital names for 12 sovereign states in South America. Using what we learned about reading and writing CSV files, you will write funtions that:

- read the content of the CSV file

- swap the order of the colums

- write the swapped columns into a new file

- return a list of rows

There are at least two different approaches to write this function.

- using

csv.readerandcsv.writer - using

csv.DictReaderandcsv.DictWriter

You should write swapColumns1 and swapColumns2 to correspond to the use of these listed approaches. Store the swapped columns into files as "swapped_columns1.csv" and "swapped_columns2.csv".

Both methods can take the name of the file as a parameter, for example:

def swapColumns1(filename)

Let's see the content of south_america.csv first:

more south_america.csv

Function swapColumns1

# Your code here

def swapColumns1(filename):

"""A function that reads a CSV file and swaps the columns,

making use of csv.reader and csv.writer.

"""

# Step 1: read the file

with open(filename, 'r') as inF:

reader = csv.reader(inF)

rows = list(reader)

# Step 2: swap the columns with list comprehension

swappedRows = [(row[1], row[0]) for row in rows]

# Alternative approach using tuple assignment:

# swappedRows = [(row1, row0) for (row0, row1) in rows]

# Step 3: write into new file

with open("swapped_columns1.csv", 'w') as outF:

writer = csv.writer(outF)

writer.writerows(swappedRows)

# return the swapped data

return swappedRows

data1 = swapColumns1("south_america.csv")

Check the newly created file to make sure it's correct.

more swapped_columns1.csv

Check the data in the returned variable:

data1

Function swapColumns2

# Your code here

def swapColumns2(filename):

"""A function that reads a CSV file and swaps the columns,

using csv.DictReader and csv.DictWriter.

"""

# Step 1: read the file

with open(filename, 'r') as inF:

dctReader = csv.DictReader(inF)

rows = list(dctReader)

# Step 2: find the column names by looking up the first row and swap them

columns = list(rows[0].keys())

swappedColumns = columns[::-1] # a quick way to reverse the list

# Step 3: write to the new file

with open("swapped_columns2.csv", 'w') as outF:

dctWriter = csv.DictWriter(outF, fieldnames=swappedColumns)

dctWriter.writeheader()

dctWriter.writerows(rows)

# Step 4: return the data (we didn't swap them, since the swapping is done by the csv.DictWriter

return rows

data2 = swapColumns2("south_america.csv")

Let's check the new file:

more swapped_columns2.csv

Let's check the returned value:

data2

The dicts in this list were not swapped, because order doesn't matter in a dictionary. We can access the values in the order we want.

for row in data2:

print(f"{row['Capital']}, {row['Country']}")

5.2 Ex 2: Aggregate values of a given column¶

Write a function totalPopulationOf that takes two parameters: 1) a filename; 2) a column name (e.g., StatePop, CapitalPop) and returns the total value in the column with the provided name. This is a good example to use DictReader, because each row will be read as a dictionary with column names as keys.

Here are two examples of calling the function:

In[]: totalPopulationOf("us-states-more.csv", 'StatePop')

Out[]: 328771307In[]: totalPopulationOf("us-states-rearranged.csv", 'CapitalPop')

Out[]: 13584723Notice that we are calling the same function, but with different files, and different column names.

# Your code here

def totalPopulationOf(filename, columnname):

"""A function that reads a CSV file and sums all values of a given column.

"""

totalPop = 0

with open(filename) as inputF:

dctReader = csv.DictReader(inputF)

for rowDct in dctReader:

totalPop += int(rowDct[columnname])

return totalPop

# Alternative solution using `sum` with list comprehension:

# def totalPopulationOf(filename, columnname):

# with open(filename, 'r') as inputF:

# return sum([int(rowDct[columnname])

# for rowDct in csv.DictReader(inputF)])

totalPopulationOf("us-states-more.csv", 'StatePop')

totalPopulationOf("us-states-rearranged.csv", 'CapitalPop')

Digging Deeper¶

These values are a bit hard to read and compare, becuase there are no separators for digits in the numbers. We can do that with the f-string:

total = 328771307

print(f"{total:,}") # the syntax for the f-string this time contains :, which is an expression

# that in this context means "use the comma as part of the number format"

The expression for formatting the number can be expanded to contain more information:

total = 328771307

print(f"{total:,.2f}") # in addition to using the comma, add 2 digits after the decimal point

Ex 3: Read from a text file into a dictionary¶

At the beginning of the notebook we showed the file us-states.txt that contained sentences of the form:

Alabama is home to 4921532 people.

Alaska is home to 731158 people.

Arizona is home to 7421401 people.Your task is to write the function readStatesFromFile that takes one parameter, the name of the file and returns a dictionary of the form:

{'Alabama': 4921532,

'Alaska': 731158,

'Arizona': 7421401,

...

}Note: Careful, some US States have two parts, such as, New York, West Virginia, etc.

# Your code here

def readStatesFromFile(filename):

"""A function that reads a file containing sentences that

contain data, extracts the data and returns them as a dictionary.

"""

statesAsDct = {}

with open(filename, 'r') as inputF:

for line in inputF:

words = line.split()

if len(words) == 6:

state, population = words[0], int(words[4])

elif len(words) == 7:

state, population = " ".join(words[0:2]), int(words[5])

statesAsDct[state] = population

return statesAsDct

statesDct = readStatesFromFile("us-states.txt")

print(statesDct)

This is all for today!